Introduction

Data is often called the new oil, and like oil it has to be refined before it is useful. Organizations need robust systems to collect, process, and transform raw information into actionable insights. That is the job of data engineering: the unsung hero behind every successful AI model, business intelligence dashboard, and real-time analytics platform.

In this blog post, we’ll explore:

✔ What data engineering is and why it matters

✔ Key components of a data pipeline

✔ Real-world applications across industries

✔ Best tools and practices for scalable data infrastructure

What is Data Engineering?

Data engineering is the discipline of designing, building, and maintaining systems that collect, store, and process data at scale. It ensures data is accurate, accessible, and optimized for analytics, machine learning, and business operations.

Data Engineer vs. Data Scientist

| Data Engineer | Data Scientist |
|---|---|
| Builds data pipelines | Analyzes data for insights |
| Focuses on infrastructure (ETL, databases) | Focuses on ML models & statistics |
| Ensures data reliability | Uses data to drive decisions |

Without data engineers, data scientists would spend much of their time finding and cleaning data instead of analyzing it!

Key Components of a Data Pipeline

A well-architected data pipeline includes:

1. Data Ingestion

- Tools: Apache Kafka, AWS Kinesis, Fluentd

- Gathers data from APIs, databases, IoT devices, and logs.

2. Data Storage

- Data Lakes (AWS S3, Azure Data Lake) – Store raw data in its original format, whether structured, semi-structured, or unstructured.

- Data Warehouses (Snowflake, BigQuery) – Optimized for analytics.

3. Data Processing

- Batch Processing (Apache Spark, Hadoop) – For large, scheduled jobs.

- Stream Processing (Apache Flink, Kafka Streams) – Real-time data handling.

4. Data Transformation (ETL/ELT)

- Cleans, enriches, and formats data for analysis.

- Tools: dbt, Talend (transformation jobs are typically scheduled by an orchestrator such as Apache Airflow)

5. Data Orchestration

- Manages workflow dependencies (e.g., Airflow, Prefect).
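The stages above can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not a production design: the in-memory `RAW_EVENTS` list stands in for an ingestion source such as Kafka, an in-memory SQLite database stands in for a warehouse, and `graphlib.TopologicalSorter` stands in for an orchestrator such as Airflow. All function, table, and field names are hypothetical.

```python
import sqlite3
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical raw events, standing in for data pulled from an API or log.
RAW_EVENTS = [
    {"user_id": "1", "amount": "19.99", "country": "us"},
    {"user_id": "2", "amount": "not-a-number", "country": "DE"},
    {"user_id": "3", "amount": "5.00", "country": "fr"},
]


def ingest(state):
    """Ingestion: copy raw records into the shared pipeline state."""
    state["raw"] = list(RAW_EVENTS)


def transform(state):
    """Transformation: cast types, normalize values, drop unparseable rows."""
    clean = []
    for row in state["raw"]:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip rows whose amount cannot be parsed
        clean.append((int(row["user_id"]), amount, row["country"].upper()))
    state["clean"] = clean


def load(state):
    """Storage: write cleaned rows to a table (in-memory SQLite here)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (user_id INTEGER, amount REAL, country TEXT)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", state["clean"])
    conn.commit()
    state["conn"] = conn


# Orchestration: each task name maps to the set of tasks it depends on.
TASKS = {"ingest": ingest, "transform": transform, "load": load}
DEPENDENCIES = {"ingest": set(), "transform": {"ingest"}, "load": {"transform"}}


def run_pipeline():
    """Run every task once, in an order that respects the dependencies."""
    state = {}
    order = list(TopologicalSorter(DEPENDENCIES).static_order())
    for name in order:
        TASKS[name](state)
    return state, order


if __name__ == "__main__":
    state, order = run_pipeline()
    print(order)
    print(state["conn"].execute("SELECT COUNT(*), SUM(amount) FROM orders").fetchone())
```

Declaring dependencies as data, rather than calling the functions in a fixed sequence, is the same idea orchestrators build on: the scheduler works out a valid run order, so adding a new task only means adding one entry to each dictionary.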

Why Data Engineering Matters

1. Powers AI & Machine Learning

- Models trained on dirty or incomplete data produce unreliable predictions. Data engineers prepare the training datasets.

2. Enables Real-Time Decision Making

- Example: Fraud detection in banking requires sub-second data processing.

3. Improves Business Efficiency

- Automated pipelines reduce manual errors and remove repetitive data-preparation work from analysts' schedules.

4. Scalability for Growing Data Needs

- A well-designed pipeline handles terabytes to petabytes of data.

Real-World Use Cases

1. E-Commerce (Personalization)

- Problem: How does Amazon recommend products?

- Solution: Data pipelines track user behavior → ML models generate suggestions.

2. Healthcare (Predictive Analytics)

- Problem: Hospitals need early warning systems for patient risks.

- Solution: Real-time data pipelines analyze vitals and EHR data.

3. FinTech (Fraud Detection)

- Problem: Detecting fraudulent transactions in milliseconds.

- Solution: Streaming data pipelines + AI anomaly detection.

4. IoT (Smart Cities)

- Problem: Managing traffic flow using sensor data.

- Solution: Data engineers process millions of IoT data points per second.
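To make the FinTech use case above more concrete, here is a minimal sketch of anomaly detection over a stream of transaction amounts. It assumes a simple rolling z-score rule, which is only one of many possible techniques and far simpler than a real fraud model. The class name, window size, and threshold are hypothetical choices for illustration.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Flags values that sit far from the mean of a sliding window of recent values."""

    def __init__(self, window_size=20, threshold=3.0, min_history=5):
        self.window = deque(maxlen=window_size)  # oldest values fall off automatically
        self.threshold = threshold               # z-score above which a value is flagged
        self.min_history = min_history           # do not judge until this many values are seen

    def observe(self, amount):
        """Return True if `amount` looks anomalous, then add it to the window."""
        is_anomaly = False
        if len(self.window) >= self.min_history:
            mu = mean(self.window)
            sigma = stdev(self.window)
            # A zero spread means all recent values were identical; skip the check.
            if sigma > 0 and abs(amount - mu) / sigma > self.threshold:
                is_anomaly = True
        self.window.append(amount)
        return is_anomaly


def flag_transactions(amounts, detector=None):
    """Feed amounts through the detector one at a time; return the flagged ones."""
    detector = detector or RollingAnomalyDetector()
    return [amount for amount in amounts if detector.observe(amount)]


if __name__ == "__main__":
    stream = [20.0, 22.5, 19.0, 21.0, 23.0, 20.5, 950.0, 22.0]
    print(flag_transactions(stream))
```

Each value is processed as it arrives and only a bounded window is kept in memory, which is what lets this style of check run inside a streaming job. One limitation of this sketch is that a flagged value still enters the window and inflates the spread for later checks; a real system might exclude it.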

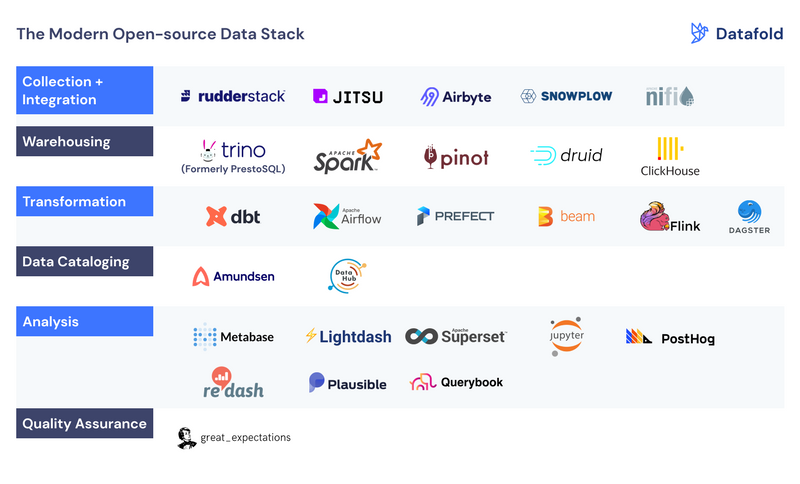

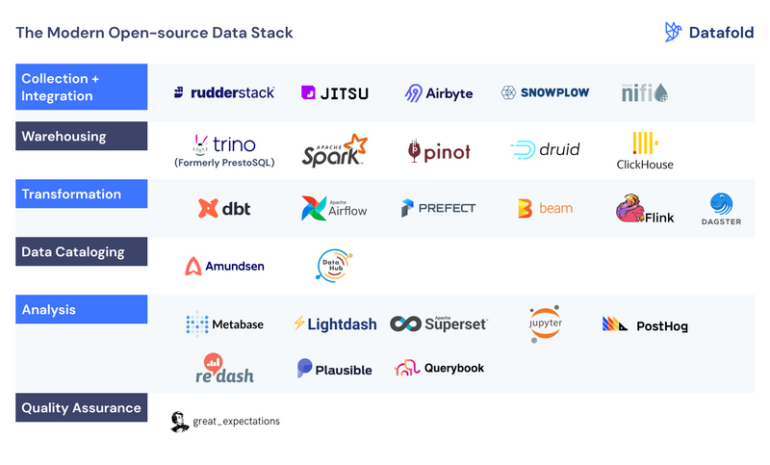

Top Data Engineering Tools in 2025

| Category | Leading Tools |
|---|---|
| Data Ingestion | Kafka, AWS Kinesis |
| Batch Processing | Spark, Hadoop |
| Stream Processing | Flink, Kafka Streams |
| Data Warehousing | Snowflake, BigQuery |
| Orchestration | Airflow, Dagster |
| Transformation | dbt, Dataform |

Best Practices for Building Data Pipelines

- Design for Scalability – Use cloud-native solutions (AWS, GCP, Azure).

- Ensure Data Quality – Validate, monitor, and log errors.

- Optimize Costs – Use serverless where possible (e.g., AWS Lambda).

- Prioritize Security – Encrypt data, enforce access controls.

- Document Everything – Metadata management is key!
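As an illustration of the "validate, monitor, and log errors" practice above, the sketch below checks each record against a small schema, logs every rejection, and keeps bad rows out of the main flow. The schema, field names, and logger name are assumptions made up for this example; dedicated data quality tools offer much richer checks.

```python
import logging

logging.basicConfig(level=logging.WARNING)
logger = logging.getLogger("pipeline.quality")  # hypothetical logger name

# Hypothetical rules: field name -> (expected type, required?)
SCHEMA = {
    "order_id": (int, True),
    "amount": (float, True),
    "coupon": (str, False),
}


def validate_record(record):
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    for field, (expected_type, required) in SCHEMA.items():
        value = record.get(field)
        if value is None:
            if required:
                problems.append(f"missing required field '{field}'")
            continue
        if not isinstance(value, expected_type):
            problems.append(
                f"field '{field}' expected {expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    # Business rule on top of the type checks.
    amount = record.get("amount")
    if isinstance(amount, float) and amount < 0:
        problems.append("amount must be non-negative")
    return problems


def split_valid_invalid(records):
    """Separate valid records from rejected ones, logging each rejection."""
    valid, rejected = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            logger.warning("rejected %r: %s", record, "; ".join(problems))
            rejected.append(record)
        else:
            valid.append(record)
    return valid, rejected


if __name__ == "__main__":
    sample = [
        {"order_id": 1, "amount": 9.5},
        {"order_id": 2, "amount": -1.0},
        {"amount": 3.0, "coupon": "SAVE10"},
    ]
    valid, rejected = split_valid_invalid(sample)
    print(len(valid), "valid,", len(rejected), "rejected")
```

Returning the rejected records instead of silently dropping them makes it possible to route them to a quarantine table and track the rejection rate over time.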

The Future of Data Engineering

- AI-Augmented Pipelines: Auto-fix errors, optimize queries.

- Data Mesh Architecture: Decentralized, domain-oriented data ownership.

- Real-Time Everything: More streaming over batch processing.

How DevJunctions Can Help

At DevJunctions, we build scalable, efficient data pipelines tailored to your business needs—whether you need:

✅ A modern data warehouse setup

✅ Real-time analytics infrastructure

✅ ETL/ELT automation

✅ Cloud migration (AWS, Azure, GCP)

🚀 Let’s turn your data chaos into actionable insights! Contact us today.

Final Thoughts

Data engineering isn’t just a technical necessity—it’s a competitive advantage. Companies investing in robust data infrastructure today will lead tomorrow’s data-driven economy.

Need a custom data solution? Get in touch with our experts for a free consultation.